The visual apocalypse is probably nigh, but perhaps seeing was never believing.

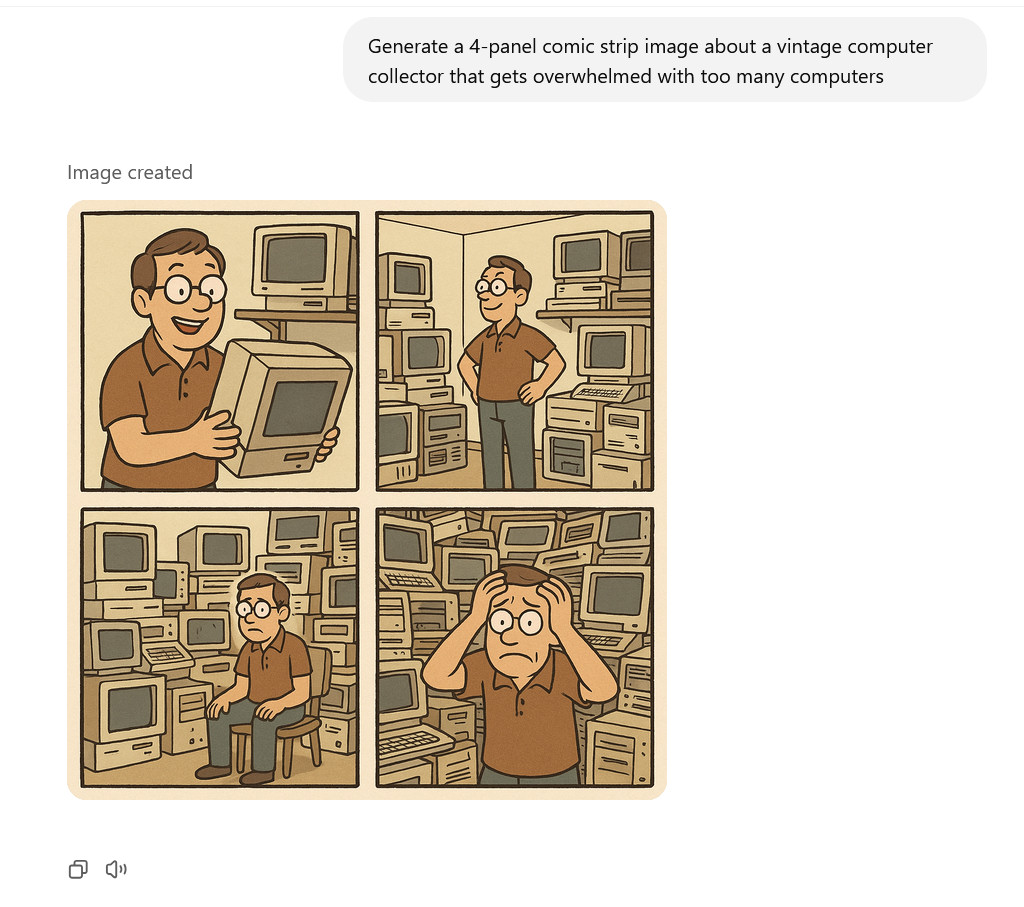

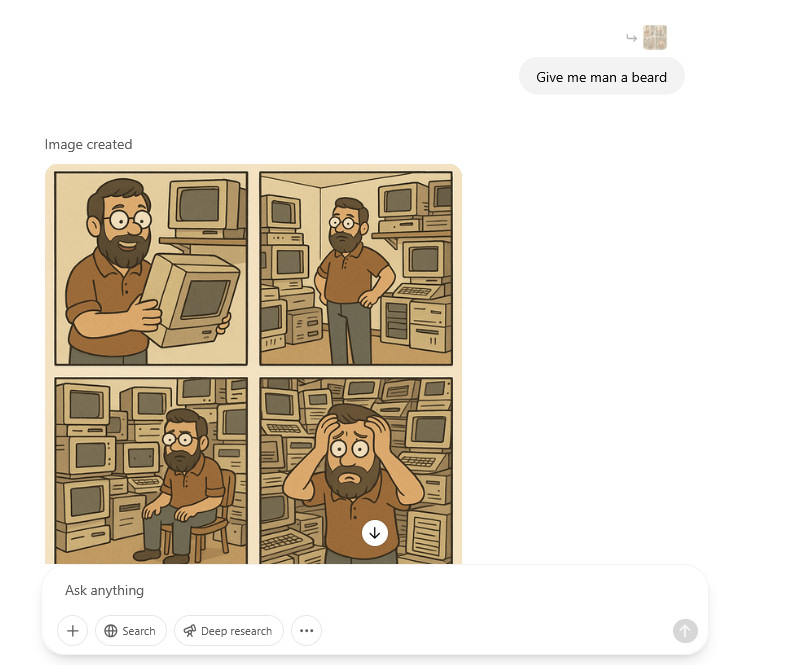

A trio of AI-generated images created using OpenAI's 4o Image Generation model in ChatGPT. Credit: OpenAI

The arrival of OpenAI's DALL-E 2 in the spring of 2022 marked a turning point in AI, when text-to-image generation suddenly became accessible to a select group of users, creating a community of digital explorers who experienced wonder and controversy as the technology automated the act of visual creation.

But like many early AI systems, DALL-E 2 struggled with consistent text rendering, often producing garbled words and phrases within images. It also had limitations in following complex prompts with multiple elements, sometimes missing key details or misinterpreting instructions. These shortcomings left room for improvement that OpenAI would address in subsequent iterations, such as DALL-E 3 in 2023.

On Tuesday, OpenAI announced new multimodal image-generation capabilities that are directly integrated into its GPT-4o AI language model, making it the default image generator within the ChatGPT interface. The integration, called "4o Image Generation" (which we'll call "4o IG" for short), allows the model to follow prompts more accurately (with better text rendering than DALL-E 3) and respond to chat context for image modification instructions.

An AI-generated cat in a car drinking a can of beer created by OpenAI's 4o Image Generation model. Credit:OpenAI

An AI-generated photo of Abraham Lincoln holding an Ars Technica sign created by OpenAI's 4o Image Generation model. Credit: OpenAI

An AI-generated image of "a muscular barbarian with weapons beside a CRT television set, cinematic, 8K, studio lighting" created by OpenAI's 4o Image Generation model. Credit: OpenAI

The new image-generation feature began rolling out Tuesday to ChatGPT Free, Plus, Pro, and Team users, with Enterprise and Education access coming later. The capability is also available within OpenAI's Sora video-generation tool. OpenAI told Ars that the image generation when GPT-4.5 is selected calls upon the same 4o-based image-generation model as when GPT-4o is selected in the ChatGPT interface.

Like DALL-E 2 before it, 4o IG is bound to provoke debate as it enables sophisticated media manipulation capabilities that were once the domain of sci-fi and skilled human creators into an accessible AI tool that people can use through simple text prompts. It will also likely ignite a new round of controversy over artistic styles and copyright—but more on that below.

Some users on social media initially reported confusion since there's no UI indication of which image generator is active, but you'll know it's the new model if the generation is ultra slow and proceeds from top to bottom. The previous DALL-E model remains available through a dedicated "DALL-E GPT" interface, while API access to GPT-4o image generation is expected within weeks.

4o IG represents a shift to "native multimodal image generation," where the large language model processes and outputs image data directly as tokens. That's a big deal, because it means image tokens and text tokens share the same neural network. It leads to new flexibility in image creation and modification.

Despite baking-in multimodal image generation capabilities when GPT-4o launched in May 2024—when the "o" in GPT-4o was touted as standing for "omni" to highlight its ability to both understand and generate text, images, and audio—OpenAI has taken over 10 months to deliver the functionality to users, despite OpenAI president Greg Brock teasing the feature on X last year.

OpenAI was likely goaded by the release of Google's multimodal LLM-based image generator called "Gemini 2.0 Flash (Image Generation) Experimental," last week. The tech giants continue their AI arms race, with each attempting to one-up the other.

And perhaps we know why OpenAI waited: At a reasonable resolution and level of detail, the new 4o IG process is extremely slow, taking anywhere from 30 seconds to one minute (or longer) for each image.

Even if it's slow (for now), the ability to generate images using a purely autoregressive approach is arguably a major leap for OpenAI due to its flexibility. But it's also very compute-intensive, since the model generates the image token by token, building it sequentially. This contrasts with diffusion-based methods like DALL-E 3, which start with random noise and gradually refine an entire image over many iterative steps.

In a blog post, OpenAI positions 4o Image Generation as moving beyond generating "surreal, breathtaking scenes" seen with earlier AI image generators and toward creating "workhorse imagery" like logos and diagrams used for communication.

The company particularly notes improved text rendering within images, a capability where previous text-to-image models often spectacularly failed, frequently turning "Happy Birthday" into something resembling alien hieroglyphics.

Source: https://arstechnica.com/ai/2025/03/openais-new-ai-image-generator-is-potent-and-bound-to-provoke/